Description

Graph signal processing (GSP) [1-3] and graph machine learning (GML) [4-6] are research fields that aim to generalize classical concepts from signal processing and machine learning to data defined on graphs. In GSP, classical operations such as filtering, sampling, and reconstruction are extended to graph signals, i.e., functions defined over the vertices of a graph [7,8]. In GML, neural network architectures are adapted to graph-structured data, leading to models such as graph neural networks (GNNs) [9] and message passing neural networks (MPNNs) [10]. GSP and GML are crucial because graph-structured data appear in numerous applications, including social networks [3], the web [11], recommender systems [12], biological networks [13], and knowledge graphs [14], among others. A common challenge across these domains is the massive scale of the underlying graphs, which often contain millions or even billions of nodes and edges [15]. Therefore, it becomes essential to compress the signals defined on it, which also enables efficient learning and processing on the compressed representations.

In the GSP literature, a large body of work has addressed the problems of sampling and reconstruction of graph signals in a principled manner [7,16-18]. These works provide theoretical guarantees for reconstructing graph signals from samples based on spectral properties of the graph, guiding the design of optimal or near-optimal sampling sets [8,19,20]. However, these methods assume the original graph is not modified and consider only the problem of sampling graph signals. In parallel, the GML community has developed principled graph coarsening methods [21] and, more recently, graph coarsening techniques with message-passing guarantees [22,23]. Yet, the associated reduction/lifting and propagation operators are challenging to optimize in practice, which limits scalability.

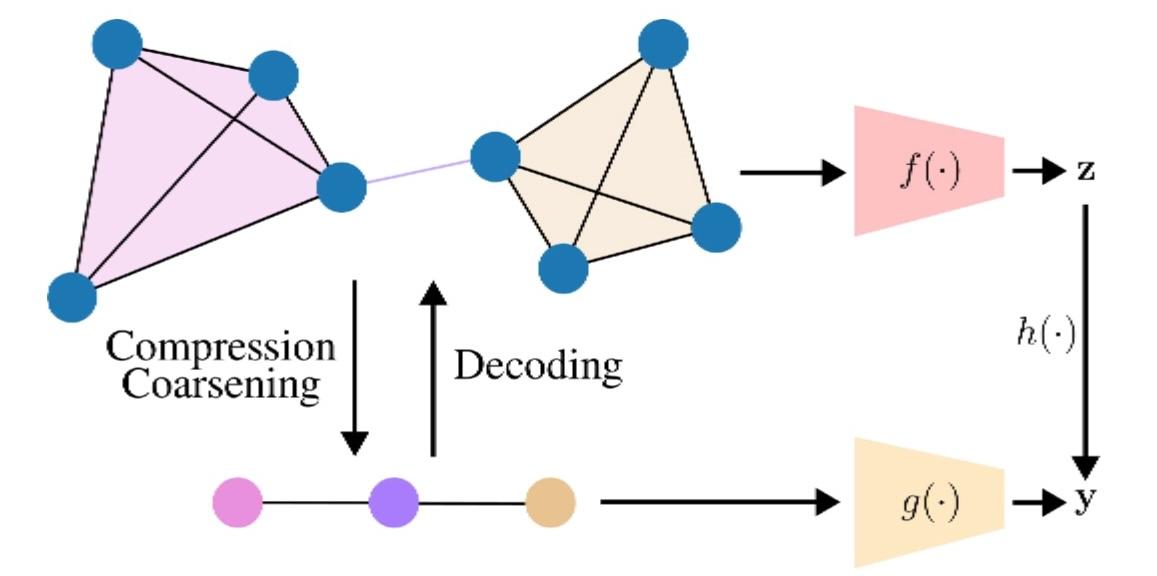

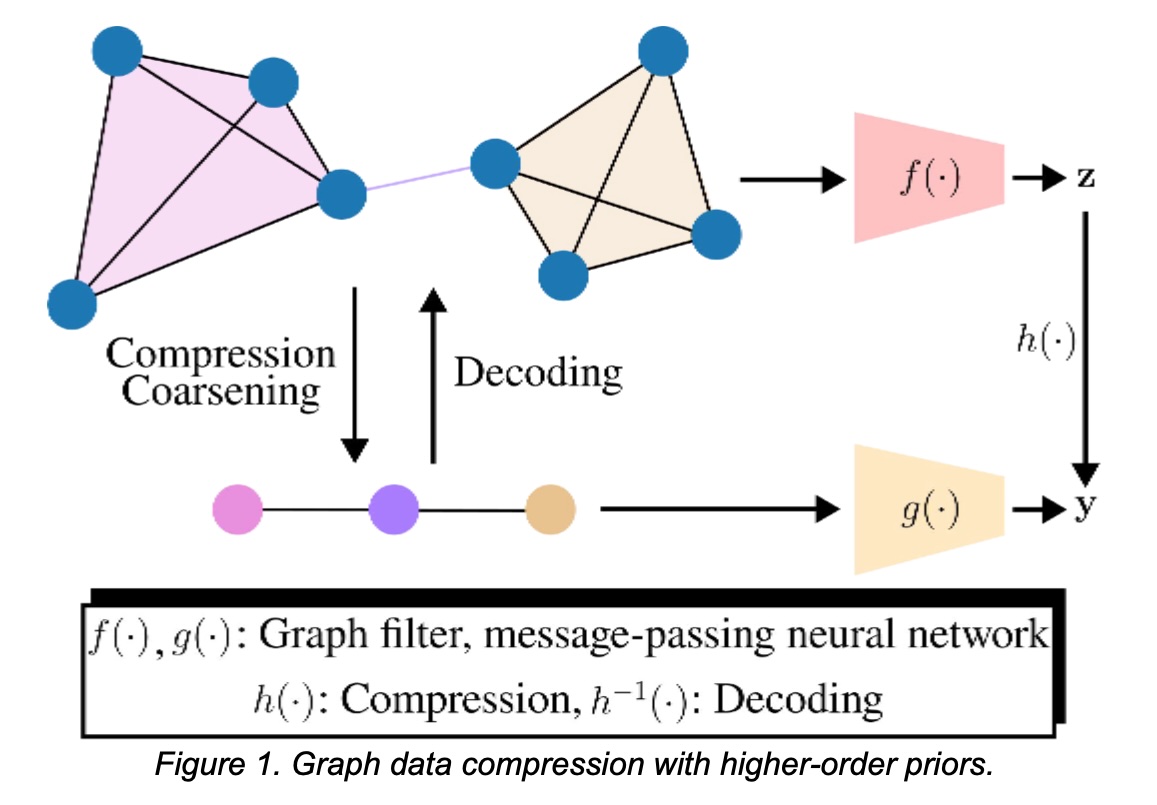

Establishing a principled framework for jointly compressing graph signals and topology with (i) decoding guarantees and (ii) task-level guarantees on the compressed topology remains an open challenge. We address this by assuming access to higher-order structures (e.g., cliques/motifs) that induces an aggregation operator and a compact graph as shown in Figure 1. This prior will let us (i) compress signals and decode them with some guarantees, and (ii) run GNNs directly on the compressed representation while preserving downstream performance. Unlike successive local aggregations [18], which form measurements by repeatedly applying a graph shift function while keeping the topology, we assume a higher-order prior, like cliques, that induces the compression operator and a compressed topology on which learning proceeds. Similarly, in contrast to the graph coarsening with message-passing guarantees method that constructs reduction/lifting operators [22,23], our higher-order prior provides the aggregation map, simplifying operator design while still enabling guarantees for GNN/MPNN propagation. A key question we study is: When are such higher-order priors informative and practically useful? We target regimes where cliques/motifs are common (e.g., community-like substructures), so that aggregation captures smooth variations in the clique/motif and preserves task performance. This problem has broad applications, ranging from graph data compression and storage to efficient processing of relational datasets in domains such as social networks, the web, recommender systems, and biological systems.

Bibliography

Bibliographie

[1] D. I. Shuman et al., “The emerging field of signal processing on graphs: Extending high- dimensional data analysis to networks and other irregular domains,” IEEE Signal Processing Magazine, vol. 30, no. 3, pp. 83–98, 2013.

[2] A. Ortega et al., “Graph signal processing: Overview, challenges, and applications,” Proceedings of the IEEE, vol. 106, no. 5, pp. 808–828, 2018.

[3] A. Ortega, Introduction to Graph Signal Processing. Cambridge University Press, 2022.

[4] M. M. Bronstein et al., “Geometric deep learning: going beyond Euclidean data,” IEEE Signal Processing Magazine, vol. 34, no. 4, pp. 18–42, 2017.

[5] Z. Wu, S. Pan, F. Chen, G. Long, C. Zhang, and P. S. Yu, “A comprehensive survey on graph neural networks,” IEEE Transactions on Neural Networks and Learning Systems, vol. 32, no. 1, pp. 4–24, 2021.

[6] C. Morris, F. Frasca, N. Dym, H. Maron, I. I. Ceylan, R. Levie, D. Lim, M. M. Bronstein, M. Grohe, and S. Jegelka, “Position: Future directions in the theory of graph machine learning,” in Forty-first International Conference on Machine Learning, 2024.

[7] S. Chen et al., “Discrete signal processing on graphs: Sampling theory,” IEEE Transactions on Signal Processing, vol. 63, no. 24, pp. 6510–6523, 2015.

[8] A. Anis, A. Gadde, and A. Ortega, “Efficient sampling set selection for bandlimited graph signals using graph spectral proxies,” IEEE Transactions on Signal Processing, vol. 64, no. 14, pp. 3775–3789, 2016.

[9] T. N. Kipf and M. Welling, “Semi-supervised classification with graph convolutional networks,” in International Conference on Learning Representations (ICLR), 2017.

[10] J. Gilmer et al., “Neural message passing for quantum chemistry,” in International Conference on Machine Learning (ICML), vol. 70, 2017, pp. 1263–1272.

[11] G. Wang, J. Yu, M. Nguyen, Y. Zhang, S. Yongchareon, and Y. Han, “Motif-based graph attentional neural network for web service recommendation,” Knowledge-Based Systems, vol. 269, p. 110512, 2023.

[12] X. Li, L. Sun, M. Ling, and Y. Peng, “A survey of graph neural network based recommendation in social networks,” Neurocomputing, vol. 549, p. 126441, 2023.

[13] R. Li, X. Yuan, M. Radfar, P. Marendy, W. Ni, T. J. O’Brien, and P. M. Casillas-Espinosa, “Graph signal processing, graph neural network and graph learning on biological data: a systematic review,” IEEE Reviews in Biomedical Engineering, vol. 16, pp. 109–135, 2021.

[14] Z. Ye, Y. J. Kumar, G. O. Sing, F. Song, and J. Wang, “A comprehensive survey of graph neural networks for knowledge graphs,” IEEE Access, vol. 10, pp. 75 729–75 741, 2022.

[15] J. Leskovec and A. Krevl, “SNAP Datasets: Stanford large network dataset collection,” http://snap.stanford.edu/data, June 2014.

[16] I. Pesenson, “Sampling in paley–wiener spaces on combinatorial graphs,” Transactions of the American Mathematical Society, vol. 360, no. 10, pp. 5603–5627, 2008.

[17] M. Tsitsvero, S. Barbarossa, and P. D. Lorenzo, “Signals on graphs: Uncertainty principle and sampling,” IEEE Transactions on Signal Processing, vol. 64, no. 18, pp. 4845–4860, 2016.

[18] A. G. Marques, S. Segarra, G. Leus, and A. Ribeiro, “Sampling of graph signals with successive local aggregations,” IEEE Transactions on Signal Processing, vol. 64, no. 7, pp. 1832–1843, 2016.

[19] G. Puy, N. Tremblay, R. Gribonval, and P. Vandergheynst, “Random sampling of bandlimited signals on graphs,” Applied and Computational Harmonic Analysis, vol. 44, no. 2, pp. 446–475, 2018.

[20] Y. Tanaka, Y. C. Eldar, A. Ortega, and G. Cheung, “Sampling signals on graphs: From theory to applications,” IEEE Signal Processing Magazine, vol. 37, no. 6, pp. 14–30, 2020.

[21] A. Loukas, “Graph reduction with spectral and cut guarantees,” Journal of Machine Learning Research, vol. 20, no. 116, pp. 1–42, 2019.

[22] A. Joly and N. Keriven, “Graph coarsening with message-passing guarantees,” Advances in Neural Information Processing Systems, vol. 37, pp. 114 902–114 927, 2024.

[23] A. Joly, N. Keriven, and A. Roumy, “Taxonomy of reduction matrices for graph coarsening,” in NeurIPS, 2025.